I noticed an article the other day about Monash University in Australia getting funding for further research into growing brain cells onto silicon chips and teaching them how to play cribbage.

Just kidding, the research is for teaching the modified brain cells tasks. Last year, the researchers succeeded in teaching them a goal-directed task: playing the tennis-like game Pong. You remember Pong from the 1970s? Shame on you if you don’t. On the other hand, that means you probably didn’t frequent any beer taverns in your hometown while you were growing up, or that you’re just too young to remember.

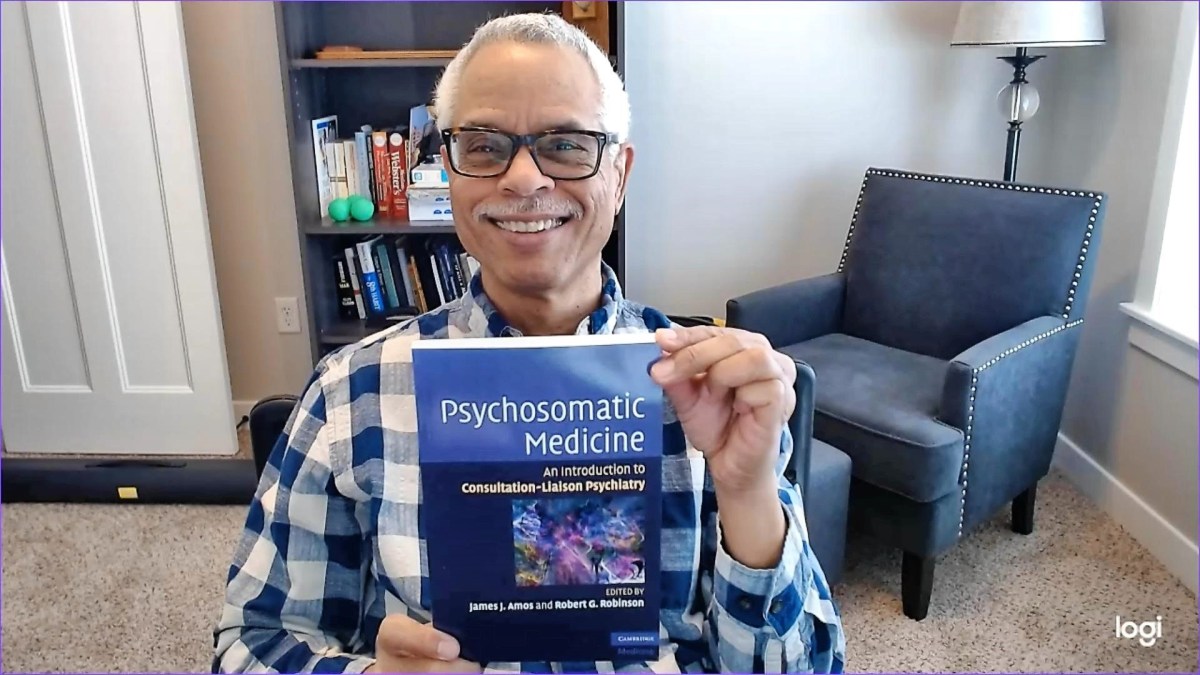

The new research program is called Cortical Labs and has hundreds of thousands of dollars in funding. The head of the program, Dr. Razi, says it combines Artificial Intelligence (AI) and synthetic biology to make programmable biological computing platforms which will take over the world and bring back Pong!

It’s an ambitious project. The motto of Monash University is Ancora Imparo, which is Italian for “I am still learning.” It links humility and perseverance.

There’s a lot of suspicion out there about AI and projects like the Pong initiative in Australia. It could eventually grow into a vast industry run by robots who will run on a simple fuel called vegemite.

Shame on you if you don’t know what vegemite is!

Anyway, it reminds me that I recently finished reading Isaac Asimov’s book of science fiction short stories, “I, Robot.”

The last two stories in the book are intriguing. Both “Evidence” and “The Evitable Conflict” are generally about the conflict between humans and AI, which is a big controversy currently.

The robopsychologist, Dr. Susan Calvin, is very much on the side of AI (I’m going to use the term synonymously with robot) and thinks a robot politician would be preferable to a human one because the AI is required to adhere to the 3 Laws of Robotics, especially the first one, which says a robot can never harm a human or, through inaction, allow a human to come to harm.

In the story “Evidence,” a politician named Stephen Byerley is suspected by his opponent of being a robot. The opponent tries to legally force Byerley to eat vegemite (joke alert!) to prove the accusation. This is based on the idea that robots can’t eat. It leads to an examination of who would make better politicians: robots or humans. Byerley at one point asks Dr. Calvin whether robots are really so different from men, mentally.

Calvin retorts, “Worlds different… Robots are essentially decent.” She, Dr. Alfred Lanning, and other characters are always cranky with each other. They stare savagely at one another and yank at mustaches so hard you wonder if the mustache will eventually be ripped from the face. That doesn’t happen to Calvin; she doesn’t have a mustache.

At any rate, Calvin draws parallels between robots and humans that render them almost indistinguishable from each other. Human ethics, the drive for self-preservation, and respect for authority, including the law, make us so much like robots that being a robot could simply mean being a very good human.

Wait a minute. Most humans behave very badly, right down to exchanging savage stares with each other.

The last story, “The Evitable Conflict,” was difficult to follow, but the bottom line seemed to be that the Machine, a major AI that is always learning, controls not just goods and services for the world but the social fabric as well, all while keeping this a secret from humans so as not to upset them.

The end result is that the economy is sound, peace reigns, the vegemite supply is secure—and humans always win the annual Pong tournaments.